1. Introduction

Probability hypothesis density (PHD) filter-based [1] trackers have enjoyed growing popularity in recent years, particularly in nonlinear, non-Gaussian multitarget visual tracking. The original PHD filter-based visual tracker usually builds its observation model from the outputs of a detector, such as a motion detector, so its performance depends on the accuracy of the detection [2]. In addition, because the target models in most visual trackers are potentially nonlinear and non-Gaussian, a particle PHD filter [3] is used to implement the PHD recursion. However, interactions among multiple targets, such as occlusion, together with clutter often make the distribution of the resampled particles complex and multimodal, which complicates state extraction. Under these conditions, the classical k-means clustering algorithm may suffer serious degradation in state extraction performance.

In this paper, to keep inaccurate detection from generating estimation errors as it does in the original PHD filter-based visual tracker [2], a color histogram with position constraints [4] is incorporated into the PHD filtering framework, which combines the appearance model of the target with its temporal dynamics in a unified framework. Moreover, to obtain more accurate state estimates, a new state extraction method based on Gaussian mixture model (GMM) clustering is proposed. Together, these yield a robust visual tracking framework.

The multitarget visual tracking problem can be formulated as a multitarget Bayes filter in a random finite set (RFS) framework by propagating the multitarget posterior in time. The particle PHD filter [3] is a sequential Monte Carlo approximation to the multitarget Bayes filter that represents the PHD with a set of random samples (weighted particles). It consists of a prediction step and an update step.
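To make the weighted-particle representation concrete, the following minimal sketch relies on the standard property that the PHD integrates to the expected number of targets in a region, so summing particle weights over a region approximates that number. The function name, the axis-aligned `region` format, and the toy values are illustrative assumptions, not taken from the paper.

```python
def expected_targets(particles, weights, region=None):
    """Sum the weights of particles whose (x, y) position lies in `region`.

    `region` is a hypothetical (x_min, y_min, x_max, y_max) box;
    None means the whole image plane.
    """
    total = 0.0
    for (x, y), w in zip(particles, weights):
        inside = region is None or (
            region[0] <= x <= region[2] and region[1] <= y <= region[3]
        )
        if inside:
            total += w
    return total


# Toy example: two particle groups, each carrying roughly one target's mass.
particles = [(10.0, 10.0), (11.0, 9.0), (50.0, 50.0), (51.0, 52.0)]
weights = [0.5, 0.5, 0.45, 0.45]

n_hat = expected_targets(particles, weights)  # expected number of targets overall
n_left = expected_targets(particles, weights, region=(0, 0, 20, 20))
print(round(n_hat), n_left)
```

Rounding the total weight is a common way to obtain an integer target count, which can then be passed to a clustering step as the number of clusters.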
Let the posterior PHD at time $k-1$ be approximated by a set $\{ w_{k-1}^{(i)}, x_{k-1}^{(i)} \}_{i=1}^{L_{k-1}}$ of $L_{k-1}$ particles and their corresponding weights. After importance sampling, the predicted PHD $v_{k|k-1}(x_k)$ can be approximated by $\{ \tilde w_{k|k-1}^{(i)}, \tilde x_k^{(i)} \}_{i=1}^{L_{k-1}+J_k}$ as

$$
v_{k|k-1}(x_k) = \sum_{i=1}^{L_{k-1}+J_k} \tilde w_{k|k-1}^{(i)} \, \delta_{\tilde x_k^{(i)}}(x_k), \tag{1}
$$

$$
\tilde w_{k|k-1}^{(i)} =
\begin{cases}
\dfrac{\phi_{k|k-1}\big(\tilde x_k^{(i)}, x_{k-1}^{(i)}\big)\, w_{k-1}^{(i)}}{q_k\big(\tilde x_k^{(i)} \mid x_{k-1}^{(i)}, Z_k\big)}, & i = 1, \cdots, L_{k-1}, \\[8pt]
\dfrac{\gamma_k\big(\tilde x_k^{(i)}\big)}{J_k \, p_k\big(\tilde x_k^{(i)} \mid Z_k\big)}, & i = L_{k-1}+1, \cdots, L_{k-1}+J_k.
\end{cases} \tag{2}
$$

In the update step, the predicted weights are updated with the measurement set $Z_k$ as

$$
\tilde w_k^{(i)} = \left[ P_M\big(\tilde x_k^{(i)}\big) + \sum_{z_k \in Z_k} \frac{P_D\big(\tilde x_k^{(i)}\big)\, p\big(z_k \mid \tilde x_k^{(i)}\big)}{\kappa_k(z_k) + C_k(z_k)} \right] \tilde w_{k|k-1}^{(i)}. \tag{3}
$$

2. Tracking Model

In the proposed tracker, the target candidate in an image is approximated by a $w \times h$ rectangle. Let the state of a target at time $k$ be $x_k = (p_{x,k}, \dot p_{x,k}, p_{y,k}, \dot p_{y,k}, w, h)^T$, with centroid $\mathbf{p}_k = (p_{x,k}, p_{y,k})$ and velocity $(\dot p_{x,k}, \dot p_{y,k})$. Assume that each target follows a linear Gaussian constant-velocity model, i.e.,

$$
x_k = \mathbf{F} x_{k-1} + v_k. \tag{4}
$$

To incorporate the appearance model into the tracking framework, we build the observation model on a color histogram [4]. Let $\{ \mathbf{s}_i \}_{i = 1, \cdots, n_h}$ be the pixel locations of the target centered at $\mathbf{p}_k = (p_{x,k}, p_{y,k})$, and let the window radius be $\mathbf{h} = (w, h)$. Define a function $\mathrm{b}: R^2 \to \{1, \cdots, m\}$ that maps the pixel at location $\mathbf{s}_i$ to the index $\mathrm{b}(\mathbf{s}_i)$ of the histogram bin corresponding to the color of that pixel. The color histogram of a target candidate, $\hat{\mathbf{q}}(\mathbf{p}_k)$, and the probability of the feature $u = 1, \cdots, m$ are defined by Eqs. 5 and 6:

$$
\hat{\mathbf{q}}(\mathbf{p}_k) = \{ \hat q^{(u)}(\mathbf{p}_k) \}_{u = 1, \cdots, m}, \qquad \sum_{u=1}^{m} \hat q^{(u)}(\mathbf{p}_k) = 1, \tag{5}
$$

$$
\hat q^{(u)}(\mathbf{p}_k) = C_h \sum_{i=1}^{n_h} \mathrm{k}\!\left( \left\| \frac{\mathbf{p}_k - \mathbf{s}_i}{\mathbf{h}} \right\| \right) \delta[\mathrm{b}(\mathbf{s}_i) - u]. \tag{6}
$$

The observation likelihood is then

$$
p(z_k \mid x_k) = \frac{1}{\sqrt{2\pi}\, \sigma_c} \exp\left\{ - \frac{d^2\big(\hat{\mathbf{q}}(\mathbf{p}_k), \hat{\mathbf{q}}_c\big)}{2\sigma_c^2} \right\}. \tag{7}
$$

3. GMM Clustering

In the particle PHD filter, a clustering algorithm is required to detect the peaks of the PHD, which define the candidate target states, from the resampled particles. We propose a GMM clustering method for state extraction. First, a GMM is fitted to the underlying distribution of a resampled particle $x_k$:

$$
S_k(x_k \mid \Theta_k) = \sum_{l=1}^{G_k} \pi_k^l \, \mathcal{N}\big(x_k \mid \mu_k^l, \Sigma_k^l\big) \quad \text{with} \quad \sum_{l=1}^{G_k} \pi_k^l = 1. \tag{8}
$$

For the set of resampled particles $\tilde X_k = \{ x_k^{(i)} \}_{i=1}^{L_k}$, the likelihood is

$$
S_k(\tilde X_k \mid \Theta_k) = \prod_{i=1}^{L_k} S_k\big(x_k^{(i)} \mid \Theta_k\big) = \prod_{i=1}^{L_k} \sum_{l=1}^{G_k} \pi_k^l \, \mathcal{N}\big(x_k^{(i)} \mid \mu_k^l, \Sigma_k^l\big). \tag{9}
$$

4. Particle PHD Filter-Based Visual Tracker with Robust State Extraction

When tracking starts, the initial state RFS of the targets is input to the proposed algorithm, and the reference models of the targets are extracted using Eq. 5 at time $k = 0$. Tracking then proceeds from time $k \geqslant 1$ as follows.

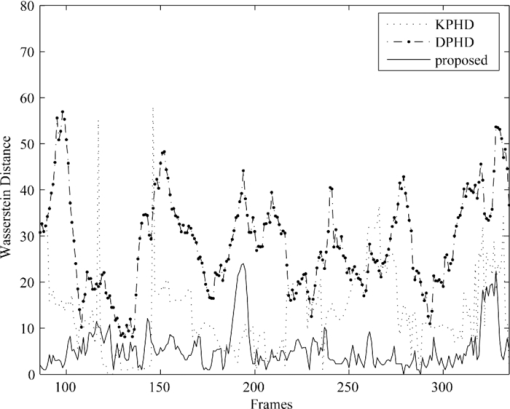

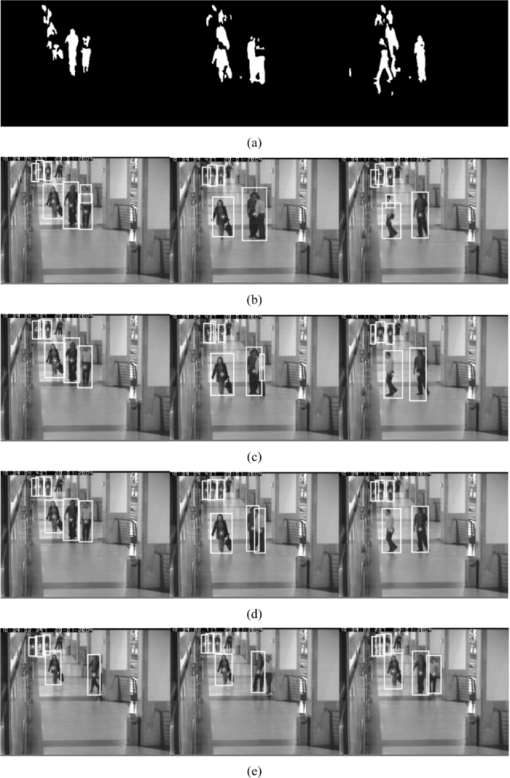
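The GMM-based state extraction of Sec. 3 can be illustrated with a small sketch. It assumes the mixture parameters of Eq. 8 are fitted by expectation-maximization (EM), the usual way to maximize a likelihood of the form in Eq. 9; the text above does not state the fitting procedure, so EM, the diagonal covariances, the caller-supplied initial means, and all function names are assumptions made for illustration only.

```python
import math


def fit_gmm(points, init_means, n_iter=30, min_var=1e-6):
    """EM for a GMM with diagonal covariances (a simplifying assumption)."""
    n, dim, n_comp = len(points), len(points[0]), len(init_means)
    means = [list(mu) for mu in init_means]
    variances = [[1.0] * dim for _ in range(n_comp)]
    mix = [1.0 / n_comp] * n_comp  # mixing weights pi^l, sum to 1

    def log_gauss(x, mu, var):
        # Log density of a diagonal Gaussian evaluated at x.
        return -0.5 * sum(
            math.log(2.0 * math.pi * v) + (a - b) ** 2 / v
            for a, b, v in zip(x, mu, var)
        )

    for _ in range(n_iter):
        # E-step: responsibility of each component for each particle.
        resp = []
        for x in points:
            logs = [
                math.log(mix[l]) + log_gauss(x, means[l], variances[l])
                for l in range(n_comp)
            ]
            top = max(logs)  # subtract the max for numerical stability
            probs = [math.exp(v - top) for v in logs]
            total = sum(probs)
            resp.append([q / total for q in probs])
        # M-step: re-estimate mixing weights, means, and variances.
        for l in range(n_comp):
            r_l = max(sum(r[l] for r in resp), 1e-12)
            mix[l] = r_l / n
            for d in range(dim):
                means[l][d] = sum(r[l] * x[d] for r, x in zip(resp, points)) / r_l
                var = sum(
                    r[l] * (x[d] - means[l][d]) ** 2 for r, x in zip(resp, points)
                ) / r_l
                variances[l][d] = max(var, min_var)
    return mix, means, variances


# Toy resampled particles (positions only) around two hypothetical targets.
cluster_a = [(9, 19), (10, 20), (11, 21), (10, 21), (9, 20)]
cluster_b = [(49, 59), (50, 60), (51, 61), (50, 61), (49, 60)]
mix, means, variances = fit_gmm(cluster_a + cluster_b, [(0, 0), (100, 100)])
state_estimates = means  # component means serve as the extracted states
print(state_estimates)
```

In this sketch the number of components is fixed by the number of initial means. One plausible choice, again an assumption, is the rounded sum of the particle weights, which estimates the number of targets.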

5. Results

The pedestrian sequence from the CAVIAR data set is used as a test video. Figure 1 indicates that PHD filter-based visual trackers can track a variable number of targets without data association. Figure 1(a) presents the detection results of a background subtraction detector. Figure 1(b) shows that the particle PHD filter that directly uses detection results as measurements (denoted DPHD) tends to generate false state estimates because of inaccurate detection, such as the detection of one person splitting into several blobs. Figure 1(c) shows that the particle PHD filter with a color-histogram observation likelihood and k-means clustering (denoted KPHD) avoids failures caused by inaccurate detection but outputs state estimates of unsatisfactory accuracy. Figure 1(d) demonstrates that our method filters and extracts more accurate state estimates. Figure 1(e) shows an example of a slower response of the proposed tracker, caused by color histogram variation in a candidate target region under occlusion. Moreover, it can be inferred that appearance variation of targets due to illumination change and occlusion, as well as background regions whose color histograms resemble those of the targets, would mislead a tracker that uses color histograms only. Additional information is needed to improve the tracker.

Fig. 1 Detection and tracking results of frames 155, 280, and 330 and an example of a failure mode in frames 104, 113, and 125: (a) detection results of background subtraction; (b) tracking by DPHD; (c) tracking by KPHD; (d) the proposed method; (e) an example of failures of the proposed method.

The Wasserstein distance [5] is introduced here to evaluate the performance of the trackers. Figure 2 compares the Wasserstein distance of the three trackers and shows that our tracker performs best.
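As a rough illustration of the evaluation metric, the sketch below computes a Wasserstein distance between a ground-truth point set and an estimated point set. It assumes the common multitarget formulation in which every point of a set carries equal mass and the base distance is Euclidean; the exact variant and parameters used for Fig. 2 are not stated in the text, so these choices, along with the function name and toy data, are assumptions. The brute-force search is only practical for the handful of targets in a toy example.

```python
import itertools
import math


def wasserstein(set_x, set_y, p=2):
    """L_p Wasserstein distance between two non-empty finite point sets.

    Each point in a set carries equal mass. Replicating points so that both
    sets have the same number of atoms turns the transport problem into an
    assignment problem, solved here by exhaustive search.
    """
    if not set_x or not set_y:
        raise ValueError("distance is undefined for an empty set")
    n, m = len(set_x), len(set_y)
    size = n * m // math.gcd(n, m)  # least common multiple of n and m
    xs = [x for x in set_x for _ in range(size // n)]
    ys = [y for y in set_y for _ in range(size // m)]
    best = min(
        sum(math.dist(x, ys[j]) ** p for x, j in zip(xs, perm))
        for perm in itertools.permutations(range(size))
    )
    return (best / size) ** (1.0 / p)


# Toy example: two true targets, three estimates (one of them spurious).
truth = [(10.0, 10.0), (50.0, 50.0)]
estimates = [(11.0, 10.0), (50.0, 52.0), (90.0, 90.0)]
print(wasserstein(truth, estimates))
```

Because mass must be transported between sets of different sizes, the distance penalizes both localization error and a wrong number of estimated targets, which is why it suits trackers that handle a varying target count.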
6. Conclusions and Discussion

In this paper, we have presented a robust multitarget visual tracking framework based on the PHD filter. It stabilizes the tracker by incorporating the color histograms of targets and their temporal dynamics in a unified framework, and it improves the accuracy of state extraction with the proposed GMM clustering method. Experiments show that the proposed framework can effectively track a varying number of targets with more accurate state estimates. Possible topics of future work include incorporating the brightness gradient into the appearance model for a more robust observation likelihood and developing a more efficient particle clustering method.

Acknowledgments

This paper is jointly supported by the National Natural Science Foundation of China (61074106) and the China Aviation Science Foundation (2009ZC57003).

References

1. R. Mahler, “Multitarget filtering using a multitarget first-order moment statistic,” Proc. SPIE, 4380, 184–195 (2001). https://doi.org/10.1117/12.436947

2. E. Maggio, M. Taj, and A. Cavallaro, “Efficient multitarget visual tracking using random finite sets,” IEEE Trans. Circuits Syst. Video Technol., 18(8), 1016–1027 (2008). https://doi.org/10.1109/TCSVT.2008.928221

3. B. N. Vo, S. Singh, and A. Doucet, “Sequential Monte Carlo implementation of the PHD filter for multi-target tracking,” 792–799 (2003).

4. D. Comaniciu, V. Ramesh, and P. Meer, “Real-time tracking of non-rigid objects using mean shift,” 142–149 (2000).

5. B. N. Vo and W. K. Ma, “The Gaussian mixture probability hypothesis density filter,” IEEE Trans. Signal Process., 54(11), 4091–4104 (2006). https://doi.org/10.1109/TSP.2006.881190
