
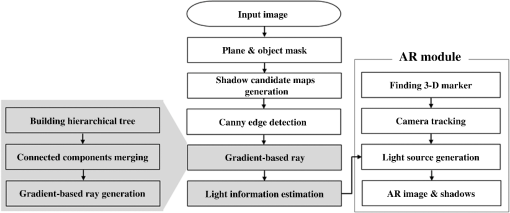

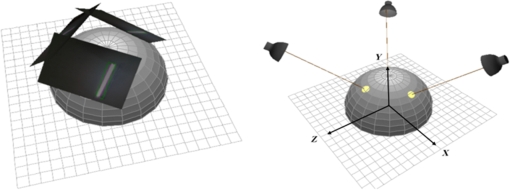

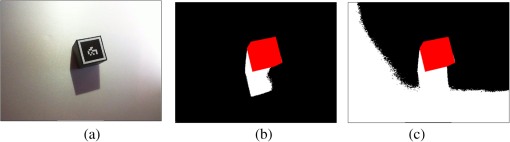

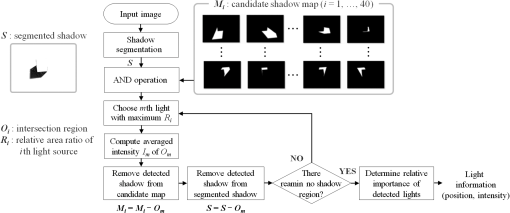

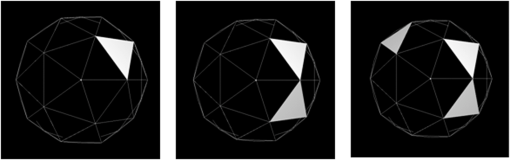

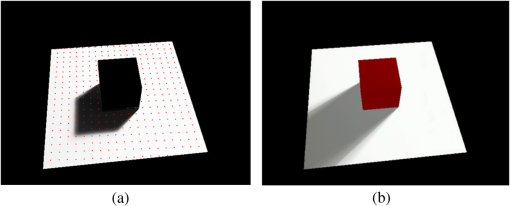

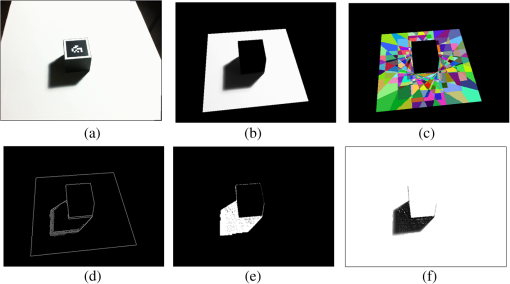

1. Introduction

Augmented reality (AR) allows users to interact virtually with their surroundings.[1] With respect to the registration process that overlays computer-generated information onto the real world, previous studies have mainly investigated how to produce an augmented world that is as realistic as possible.[2–8] In other words, optical consistency between the real world and virtual objects is one of the main topics in AR. Naemura et al. noted that consistency of geometry, time (a synchronized world that facilitates smooth interaction), and illumination is important in AR applications.[9] The distribution of the real light sources must be known in order for virtual objects to cast convincing shadows that match those already present in the real scene.[10] Without shadows, virtual objects appear to float, and the environment looks unrealistic. The goal of this paper is to estimate the distribution of the illumination in a scene and then to generate virtual shadows that improve the visual perception and realism of an AR application.

Many studies on illumination estimation have described generating realistic images that reflect the distribution of the environmental illumination.[2,4–8] Agusanto et al. introduced an image-based lighting method that uses a light probe, such as a metallic sphere.[2] Havran et al. generated temporally coherent illumination with a light probe by using a high dynamic range camcorder.[4] Arief et al. introduced an illumination direction estimation algorithm for a mobile AR system.[7] They used shadow contour detection and tracing to extract the shadow region cast by a cubic reference object. The shape of the shadow contour was then analyzed to recognize the outline points corresponding to the geometry of the reference object. As a result, this method can determine the direction of only a single strong light source, because it extracts salient contours from the shadowed region.
In the real world, many light sources produce indistinct shadow boundaries, so this method cannot precisely analyze multiple shadows produced by various light sources.

We estimate the illumination distribution of a scene from the image brightness inside the shadows cast by an object of known shape and size. The proposed method is most similar to the method of Sato et al.,[5] which solves a linear equation defined by pixel values, scene parameters, and unknown illumination radiance using a linear least-squares algorithm. Since that method is based on point sampling in the shadow regions, its performance is highly affected by the number of sample points and their positions. To overcome this instability, Sato et al. partitioned a shadow surface around a reference point into clusters and selected one pixel from each cluster.[6] Since many shadow regions overlap, it is difficult to choose evenly distributed sample points, and as a result the subsequent numerical computation is unstable.

The shadows in an image provide important cues about the shape and position of an object as well as the characteristics of the light sources, and many studies on shadow detection have been reported. Finlayson et al. proposed a set of illumination-invariant features to detect and remove shadows from a single image.[11] However, this method is applicable only to shadow images with relatively distinct boundaries. Panagopoulos et al. estimated the illumination environment and detected shadows by using a coarse three-dimensional (3-D) model and textured surfaces from a single image.[12] The illumination parameters of the mixture model are estimated using the expectation-maximization (EM) framework. This method is insensitive to the accuracy of the 3-D model in describing the scene geometry, and it is able to infer shadow information from textured surfaces.
However, it takes 3 to 5 min to estimate the illumination parameters from an input image because several EM iterations are performed. Jiang et al. used color and illumination features in a shadow detection method for natural scenes with complicated scene elements.[13] This method extracts an intrinsic image in which the illumination and reflectance components can be separated. The resulting illumination maps contain robust features of the intensity change at shadow boundaries. The features from the illumination maps and the color segmentation results are then combined and used as inputs to an AdaBoost-based decision tree that detects shadow edges. As a result, the detection performance depends on how well the decision method has been trained on a set of labeled images.

To achieve seamless integration of a synthetic object into a given image, the proposed method estimates the illumination distribution surrounding a virtual object and generates realistic virtual shadows. In this paper, we introduce a robust shadow segmentation method that uses a Canny edge detector and a gradient-based ray. We also present an efficient illumination estimation that uses an intersection operation between the candidate shadow maps and the segmented shadow.

Specifically, the proposed method employs a gradient-based ray to extract the shadow regions cast by a 3-D AR marker of known shape and size. The idea of using a gradient-based ray to detect a region of interest was recently introduced in the stroke width transform (SWT) operator.[14] SWT is a local image operator that computes, for each pixel, the width of the most likely stroke containing that pixel. Epshtein et al.
defined a stroke to be a contiguous part of an image that forms a band of nearly constant width within some intensity range.[14] Since text has high variability in both geometry and appearance, text detection requires further steps such as postfiltering and aggregation. Since the shape of a shadow is generally much simpler than that of text, we can efficiently segment the shadow regions with the gradient-based ray without further postprocessing. In this paper, both the candidate shadow maps and the segmented shadows are used to extract the illumination information from the shadow image. The illumination distribution obtained is then used to generate realistic rendered images with multiple shadows.

2. Proposed Method

2.1. Construction of the 3-D AR Space

The image formation process is a function of three components: the 3-D geometry of the scene, the reflectance of the different surfaces in it, and the distribution of the lights around it. This paper focuses on estimating the illumination information of the environment. The proposed method extracts the shadow regions from a real scene and estimates the light information (direction and intensity) with candidate shadow maps. The shaded boxes in Fig. 1 represent the main components of our contribution.

The 3-D synthetic space is constructed in a spherical coordinate system whose origin is positioned at the center of the AR marker, as shown in Fig. 2. Here, a 3-D AR marker of known shape and size is employed. We assume that the light sources are distant, so the illumination can be approximated as a mixture of the light distribution on a unit sphere for the light direction.[5] In the 3-D AR space, every light source that illuminates the scene is placed on the centroid of a polygon of a geodesic dome. The location of each light source is represented by its azimuth and elevation in the spherical coordinate system. Shadows are caused by the occlusion of incoming light in a scene.
In general, the image brightness inside the shadows can provide strong clues about the distribution of the environmental illumination. In this paper, the shadow region generated by the 3-D AR marker and each light source on the geodesic dome is clustered into a candidate shadow map, as in Ref. 5. The proposed method uses the ARToolkit module to detect the AR marker in an input image and to estimate the camera parameters (including translation and rotation).[15] The estimated environmental illumination determines the direction and intensity of the light sources during rendering, so the proposed method can generate a realistic shadow image that reflects the illumination of the surrounding environment in real time.

2.2. Gradient-Based Ray for Shadow Segmentation

Arief et al. extracted shadow contours to estimate the direction of the illumination under the assumption that a single dominant light source is present in the scene.[7] Even when salient shadow boundaries are present in the input image, the detection performance for the shadow regions depends highly on the threshold values, as seen in Fig. 3. Here, a virtual object generated according to the relationship between the AR marker and the camera system is colored in red. Previous pixel-based methods that examine the shadow intensity are sensitive to the number of sampling points and their positions. In addition, it is difficult to determine the optimal threshold value for shadow detection.[5,7]

The ARToolkit module provides viewpoint tracking and virtual object interaction.[15] Specifically, the position and orientation of the real camera relative to a square physical marker are calculated in real time. A square of known size serves as the base of the coordinate frame in which virtual computer graphics objects are generated. In this paper, the marker is placed on a cubic reference object that occludes the incoming light in the scene.
The transformation matrix from marker coordinates to camera coordinates is estimated. Once the real camera position is known, a virtual object with the same shape and size as the real marker can be overlaid exactly on the real marker. A mask image is then used to filter out both the 3-D marker object and the meaningless background regions, as shown in Fig. 4(b). Next, the candidate shadow maps are obtained on the shadow surface. Specifically, each candidate shadow generated by the 3-D AR marker and a light source on the geodesic dome is partitioned into a candidate shadow map, which is represented with a unique color, as in Fig. 4(c).

Fig. 4 (a) Input image, (b) masked image, (c) candidate shadow maps on the shadow surface, (d) edge image, (e) regions filled with the gradient-based ray, and (f) intersection region between (b) and (e).

We obtain the edge components of shadows with a Canny edge detector instead of directly extracting shadow regions with a global threshold method. Because the Canny edge detector employs double thresholds and edge tracking by hysteresis, it can detect edges over a wide intensity range. This alleviates, to a certain degree, the limitations of a global threshold method. In addition, by using the gradient-based ray and the topological structure of the connected components, we can greatly reduce the unwanted effects of the edge detector's threshold values. The connected components in the edge map are extracted by labeling, and a bounding box (minimum bounding rectangle) is generated for each component. The extent and position of each bounding box are saved as node information in a tree structure. By examining the inclusion relations of the components, we construct a hierarchical tree of the connected components that represents their topological relations.
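
The inclusion test on bounding boxes can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `Node` class, the function names, and the `min_inclusion` parameter are hypothetical, and boxes are assumed to be `(x0, y0, x1, y1)` tuples with exclusive upper bounds. Each box is attached to the smallest strictly larger box that covers at least `min_inclusion` of its area.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One connected component, described only by its bounding box."""
    box: tuple  # (x0, y0, x1, y1), upper bounds exclusive (assumed convention)
    children: list = field(default_factory=list)


def area(box):
    x0, y0, x1, y1 = box
    return max(0, x1 - x0) * max(0, y1 - y0)


def inclusion(inner, outer):
    """Fraction of `inner` that lies inside `outer` (0.0 to 1.0)."""
    overlap = (max(inner[0], outer[0]), max(inner[1], outer[1]),
               min(inner[2], outer[2]), min(inner[3], outer[3]))
    inner_area = area(inner)
    return area(overlap) / inner_area if inner_area else 0.0


def build_tree(boxes, min_inclusion=1.0):
    """Return the root nodes of the inclusion hierarchy.

    min_inclusion=1.0 means strict containment; a lower value tolerates
    partially overlapping boxes (hypothetical parameter).
    """
    # Largest boxes first, so every possible parent precedes its children.
    nodes = [Node(b) for b in sorted(boxes, key=area, reverse=True)]
    roots = []
    for i, node in enumerate(nodes):
        parent = None
        for cand in nodes[:i]:
            if (area(cand.box) > area(node.box)
                    and inclusion(node.box, cand.box) >= min_inclusion):
                parent = cand  # later matches are smaller, so keep the last
        (parent.children if parent else roots).append(node)
    return roots
```

With strict containment, nested boxes form a chain of parent and child nodes, while a box that overlaps nothing becomes a separate root.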
For example, if the bounding box of one component is included in that of another component, the smaller component is stored as a child node of the larger component. By setting a threshold on the inclusion relation of the components (how much two bounding boxes overlap), we can determine the level of the tree structure. Components of the same tree structure are then merged, and components that are too small relative to the candidate shadow maps are removed.

Next, the gradient direction of each edge pixel is considered. The Sobel operator is applied to the masked image [Fig. 4(b)] to obtain the gradient $g_p$ at pixel $p$. If $p$ lies on a shadow boundary, $g_p$ is roughly perpendicular to the orientation of the shadow edge. The proposed method defines a gradient-based ray in parametric form, $r = p + n \cdot g_p$, where $n$ is the spatial parameter ($n > 0$). We follow the ray until another shadow pixel is found. Figure 4(e) shows the regions filled with the gradient-based ray. An intersecting region is computed between the masked image [Fig. 4(b)] and the filled regions [Fig. 4(e)], and we obtain the segmented shadow region efficiently, as in Fig. 4(f).

2.3. Using Candidate Shadow Maps for Illumination Estimation

We use a simple logical operation to obtain the area of the intersection region between the segmented shadow $S$ and the candidate shadow map $C_i$ of the $i$th light source. Here $S$ is obtained in the shadow segmentation stage (Sec. 2.2), and $C_i$ is generated from the 3-D AR marker and the light source on the geodesic dome in the 3-D AR space construction stage. Table 1 shows pseudo code for the light source estimation, and Fig. 5 shows a diagram of the illumination estimation process with candidate shadow maps.

In Table 1, we compute the first term, the area of $S \cap C_i$ divided by the total area of $S$, which represents the relative contribution of the $i$th light source to the shadow surface. Here the function $\mathrm{Area}(\cdot)$ computes the total area of the region of interest. Then we compute the second ratio as the area of $S \cap C_i$ divided by that of each candidate shadow map $C_i$. Only a light source whose second ratio reaches a preset threshold is considered a significant light source, which means that the candidate map of the $i$th light source has been sufficiently shadowed. By multiplying the two area ratios, we obtain the relative contribution $w_i$ of the $i$th light source, which is used as a robust measure to determine whether the candidate shadow for each light source on the geodesic dome appears in the segmented shadow region. By comparing the $w_i$ of all candidate shadow maps (over all light sources $i$), we choose the candidate shadow map with the maximum value $w_k$. The $k$th light source is chosen as the most significant light source in this step, and its intensity is computed as the average intensity of all pixels in the corresponding shadow region. The shadow region of the light source with the largest contribution is then removed not only from the segmented shadow $S$ but also from the candidate shadow maps $C_i$ in an iterative loop. This process continues until no shadow regions remain.

Table 1 Pseudo code for the light source estimation.

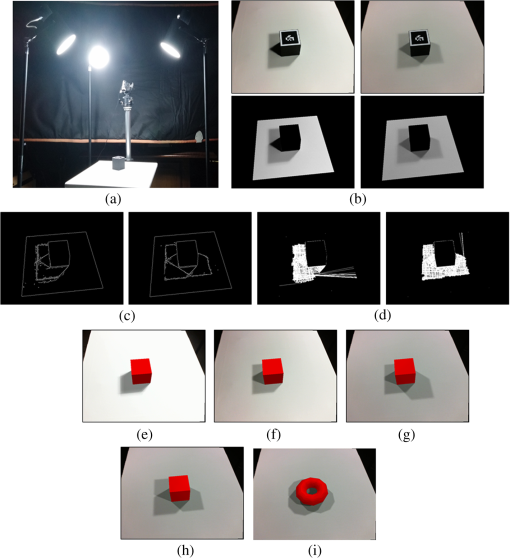
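
The greedy loop described above can be sketched in plain Python by representing the segmented shadow and each candidate shadow map as a set of pixel coordinates, so that the logical operation becomes a set intersection. This is a sketch under stated assumptions, not a transcription of Table 1: the function name, the `min_coverage` default of 0.5, the choice to average intensity over the overlap region, and the choice to remove the whole selected candidate map from the remaining regions are all assumptions made for illustration. The `min_area` default mirrors the 400-pixel threshold mentioned in the text.

```python
def estimate_lights(shadow, candidates, intensity,
                    min_coverage=0.5, min_area=400):
    """Greedily pick light sources whose candidate shadows explain `shadow`.

    shadow:      set of (row, col) pixels in the segmented shadow
    candidates:  dict light_id -> set of (row, col) pixels (candidate map)
    intensity:   dict (row, col) -> pixel intensity
    Returns a list of (light_id, mean_intensity) in selection order.
    """
    remaining = set(shadow)
    # Ignore candidate maps that are too small to be measured reliably.
    maps = {i: set(c) for i, c in candidates.items() if len(c) >= min_area}
    lights = []
    while remaining:
        best = None  # (score, light_id, overlap)
        for light_id, cand in maps.items():
            if not cand:
                continue
            overlap = remaining & cand
            coverage = len(overlap) / len(cand)         # second ratio
            if coverage < min_coverage:
                continue                                # not shadowed enough
            contribution = len(overlap) / len(remaining)  # first ratio
            score = contribution * coverage
            if best is None or score > best[0]:
                best = (score, light_id, overlap)
        if best is None or not best[2]:
            break  # no candidate explains the remaining shadow
        _, light_id, overlap = best
        mean = sum(intensity[p] for p in overlap) / len(overlap)
        lights.append((light_id, mean))
        # Remove the selected region from the shadow and the other maps.
        region = maps.pop(light_id)
        remaining -= region
        for cand in maps.values():
            cand -= region
    return lights
```

Because one candidate map is removed in every iteration, the loop ends after at most as many passes as there are candidate maps, even if part of the segmented shadow is never explained.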

If the user captures a single photograph, some of the cluster regions that are completely or largely occluded by the 3-D AR marker are excluded. When the camera is far from the shadow surface, some candidate shadow maps have areas too small for their presence in the real shadow region to be measured precisely. Considering the distance between the camera and the shadow surface, we therefore exclude candidate maps with too small an area from the illumination estimation, using a threshold to decide whether a candidate shadow map is worth considering. Here, this threshold is set to 400 pixels (a $20 \times 20$ region).

For rendering, an area light source, such as an incandescent lamp, is represented with multiple point light sources. The light sources detected on the polygons of the geodesic dome are subsampled to effectively represent area lights in the real world. By dividing the intensity of the $i$th light source by the total intensity of all detected light sources, we obtain the relative intensity of the $i$th light. Finally, we determine the number of sample points for each detected light source according to its relative contribution to the segmented shadow regions. The illumination distribution estimated from the real scene is used to generate realistic shadows in real time.

3. Experimental Results

The experimental equipment consists of a PC with a 3.4 GHz CPU. Figure 6(a) shows the experimental setup used to evaluate the accuracy of the estimated light source information. The directions of three light sources (elevation and azimuth angles in a spherical coordinate system centered on the marker) are measured manually as ground truth. In general, real light sources such as light bulbs and fluorescent lights have a finite volume, so the estimated light source locations are represented with angular diameters (in radians) on a geodesic dome.
In our experiment, each real light source is treated as a sphere with a radius of 5 cm. The illumination environment is covered by a unit sphere represented as a geodesic dome of 80 triangles. Since light sources below the shadow surface cannot contribute to shadow generation, the proposed method examines only 40 triangles of the geodesic dome, and the estimated light sources are positioned on these triangles. Increasing the number of faces of the geodesic dome yields more realistic virtual shadows, but more candidate shadow maps must then be examined.

Fig. 6 (a) Experimental setup; (b) input images with two and three light sources and masked images; (c) their edge images; (d) segmented regions with gradient-based ray; (e), (f), and (g) rendered images of a virtual cube with 40 candidate lights in three cases (one, two, and three light sources); (h) and (i) rendered images of a virtual cube and torus with 160 candidate lights in the third case.

To evaluate the performance of the proposed method, we estimate the illumination information for three cases according to the number of light sources (one, two, and three). Table 2 shows that the estimated light directions are close to the directions measured for the real light sources. Here, the estimated light directions are represented with the angular ranges of the triangles of the geodesic dome. Figures 6(c) and 6(d) show the edge images from the Canny edge detector and the regions segmented with the gradient-based ray. As shown in Fig. 6(c), multiple indistinct boundaries may be detected on the shadow surface. Figure 7 shows Canny edge detection and gradient-based segmentation results for different double threshold values. In Fig. 7, the double threshold values (on the gradient value of the edge pixel) are set to three cases: 10 and 20 (first row), 30 and 60 (second row), and 150 and 300 (third row).
The left and right images show the edge images from the Canny edge detector and the regions segmented with the gradient-based ray, respectively, for the three cases. Although many ambiguous edge segments appear in the edge images depending on the threshold values, the shadow maps of the light sources were detected in every case except the final one, with threshold values of 150 and 300 and two lights. Based on the experimental results in Fig. 7, the low and high threshold values are set in the ranges of 15 to 30 and 30 to 60, respectively. The proposed method uses the gradient-based ray and the topological structure of the connected components to greatly reduce the unwanted effects of the edge detector's threshold values. In addition, even when salient, closed shadow boundaries are not obtained, we can still estimate the illumination information precisely. The real shadow distribution in the input images [Figs. 4(a) and 6(b)] is similar to that in the final rendered images [Figs. 6(e)–6(g)] with 40 light sources.

Table 2 Comparison of the measured and estimated light directions.

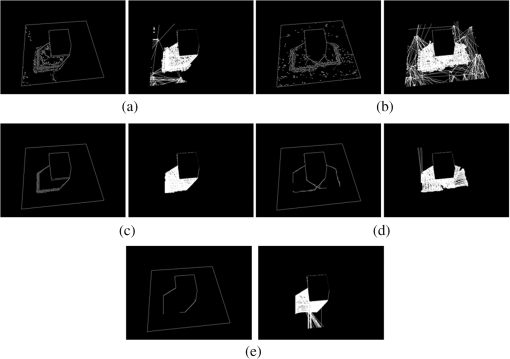
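
Since each estimated light is tied to a triangle of the geodesic dome, its direction can be read off the triangle centroid as an azimuth and an elevation. The following is a minimal sketch, assuming a z-up frame centered on the marker, azimuth measured counterclockwise from the +x axis, and angles in degrees; the function names and axis conventions are illustrative assumptions and are not taken from the paper.

```python
import math


def centroid_direction(v0, v1, v2):
    """Return (azimuth_deg, elevation_deg) of a triangle's centroid.

    Vertices are (x, y, z) points on or near the unit sphere. The centroid
    is normalized, so only its direction from the origin matters.
    """
    cx = (v0[0] + v1[0] + v2[0]) / 3.0
    cy = (v0[1] + v1[1] + v2[1]) / 3.0
    cz = (v0[2] + v1[2] + v2[2]) / 3.0
    norm = math.sqrt(cx * cx + cy * cy + cz * cz)
    azimuth = math.degrees(math.atan2(cy, cx)) % 360.0
    elevation = math.degrees(math.asin(cz / norm))
    return azimuth, elevation


def upper_hemisphere(triangles):
    """Keep only directions above the shadow surface (elevation > 0)."""
    directions = (centroid_direction(*tri) for tri in triangles)
    return [d for d in directions if d[1] > 0.0]
```

Filtering on positive elevation corresponds to discarding the dome triangles that lie below the shadow surface, which is how 80 triangles reduce to 40 candidate lights in the experiments.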

Fig. 7 (a), (c), and (e) Edge images from the Canny edge detector (left) and regions segmented with the gradient-based ray (right) in the case of one light source; (b) and (d) edge images (left) and segmented regions (right) in the case of two light sources. The double threshold values are set to 10 and 20 [first row: (a) and (b)], 30 and 60 [second row: (c) and (d)], and 150 and 300 [third row: (e)].

To examine the effect of the number of candidate light sources, the geodesic dome of 40 polygons is subdivided into one of 160 polygons. In this paper, every candidate light source has one shadow map on the shadow surface, and by considering more candidate light sources, we can generate more realistic shadows. When comparing Figs. 6(g) and 6(h), the image rendered with 160 lights is closer to the input image [right of Fig. 6(b)] than that rendered with 40 lights. In addition, the illumination information is estimated within 0.5 s by using the candidate shadow maps, because the relative contribution of every candidate light source is computed with a simple logical operation on the candidate shadow map and the segmented shadow region. The detected polygons of the geodesic dome are further subdivided, which lets us generate rendered images with a salient shadow boundary and a narrow penumbra.

The light sources detected in the three cases are positioned on the geodesic dome, as shown in Fig. 8. To generate more natural rendered images, the proposed method generates 40 subsampling points among the detected light sources according to their relative importance weights. In Fig. 8, the 15th light source has 16 subsamples, and the 19th and 21st light sources have 12 subsamples each.

To compare the proposed method with a previous method, we include experimental results obtained using the algorithm of Sato et al.[5] This method formulates a linear equation relating the illumination radiance of a scene to the pixel values of the shadow image.
It estimates the illumination radiance from the pixels sampled in the shadow region. Figure 9 shows the effects of two sampling factors: the distribution of the sample points and their number. Here, the red dots represent the sample points used for the illumination radiance estimation; points in the 3-D marker region are excluded. In Fig. 9, the shadow surface is sampled regularly with 400 points. By sampling the shadow surface sufficiently, we can include enough sample points to estimate the illumination distribution of the scene. The estimated light direction in Fig. 9(b) is somewhat similar to the input light direction in Fig. 9(a). However, more sample points fall in the shadow map of another light source than in that of the real light. This means that the number of sample points assigned to the actually shadowed region affects the final estimation results. In other words, the light source whose shadow map contains the most sample points may be determined to be a significant light source. If the shadow surface is sampled with too few points, we cannot obtain shadow information for every light direction of the geodesic dome, and the numerical instability of the linear equation increases because some shadow regions contain no sampling points. Appropriate sample points must be included for both the light sources occluded by the object (the 3-D marker) and the unoccluded lights; that is, the samples must be chosen carefully so that the variation among these light sources is maximized. The method of Sato et al. sometimes fails to estimate the illumination distribution correctly because it is sensitive to the number of sampling points and their positions.[5,6]

4. Conclusion

This paper presents a method that estimates the illumination distribution of the environment from an image of a real scene by using a gradient-based ray and candidate shadow maps.
The gradient-based ray is employed to segment the shadows cast by the light sources and the 3-D AR marker of known shape and size. The relative area ratios between the segmented shadow and the candidate shadow maps are used to extract the information of the environmental light. Using this light information, the proposed method can project realistic shadows of a virtual object onto a real scene.

In this paper, every light source that illuminates the scene is placed on the centroid of a polygon of a geodesic dome. However, because the illumination environment in the real world consists of a number of complicated scene objects, it is difficult to represent the environment accurately with a hemisphere model. In particular, the proposed method cannot handle overlapping shadows cast by lights that have the same azimuth but different inclination angles within one geodesic dome polygon. As a result, the shape and size of a candidate shadow map may differ from those of the real shadow cast by the light source. Further studies will introduce an illumination estimation method that minimizes the difference between the generated virtual shadows and the real shadows.

Acknowledgments

This research was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education, Science and Technology (No. 2013008953).

References

1. R. Azuma, “A survey of augmented reality,” Presence: Teleoperators Virtual Environ. 6(4), 355–385 (1997).
2. K. Agusanto et al., “Photorealistic rendering for augmented reality using environment illumination,” in Proc. IEEE Int. Symp. on Mixed and Augmented Reality, 208–216 (2003).
3. M. Haller, “Photorealism or/and non-photorealism in augmented reality,” in Proc. ACM SIGGRAPH Int. Conf. on Virtual Reality Continuum and Its Applications in Industry, 189–196 (2004).
4. V. Havran et al., “Interactive system for dynamic scene lighting using captured video environment maps,” in Proc. Eurographics Symp. on Rendering, 31–42 (2005).
5. I. Sato, Y. Sato and K. Ikeuchi, “Illumination from shadows,” IEEE Trans. Pattern Anal. Mach. Intell. 25(3), 290–300 (2003). http://dx.doi.org/10.1109/TPAMI.2003.1182093
6. I. Sato, Y. Sato and K. Ikeuchi, “Stability issues in recovering illumination distribution from brightness in shadows,” in Proc. of IEEE Computer Vision and Pattern Recognition, 400–407 (2001).
7. I. Arief, S. McCallum and J. Y. Hardeberg, “Realtime estimation of illumination direction for augmented reality on mobile devices,” in Proc. of IS&T Color and Imaging Conf., 111–116 (2012).
8. Y. Jung, E. Choi and H. Hong, “Using orientation sensor of smartphone to reconstruct environment lights in augmented reality,” in Proc. of IEEE Int. Conf. on Consumer Electronics, 53–54 (2014).
9. T. Naemura et al., “Virtual shadows—enhanced interaction in mixed reality environment,” in Proc. of IEEE Virtual Reality, 293–294 (2002).
10. S. Ryu et al., “Tangible video teleconference system using real-time image-based relighting,” IEEE Trans. Consum. Electron. 55(3), 1162–1168 (2009). http://dx.doi.org/10.1109/TCE.2009.5277971
11. G. D. Finlayson et al., “On the removal of shadows from images,” IEEE Trans. Pattern Anal. Mach. Intell. 28(1), 59–68 (2006). http://dx.doi.org/10.1109/TPAMI.2006.18
12. A. Panagopoulos, D. Samaras and N. Paragios, “Robust shadow and illumination estimation using a mixture model,” in Proc. of IEEE Conf. on Computer Vision and Pattern Recognition, 651–658 (2009).
13. X. Jiang, A. Schofield and J. Wyatt, “Shadow detection based on colour segmentation and estimated illumination,” in Proc. of British Machine Vision Conf., 87.1–87.11 (2011).
14. B. Epshtein, E. Ofek and Y. Wexler, “Detecting text in natural scenes with stroke width transform,” in Proc. of IEEE Computer Vision and Pattern Recognition, 2963–2970 (2010).
15. C. Eem et al., “Estimating illumination distribution to generate realistic shadows in augmented reality,” KSII Trans. Internet Inf. Syst. 9(6), 2289–2301 (2015).

Biography

Changkyoung Eem received his BS, MS, and PhD degrees in electronic engineering from Hanyang University, Korea, in 1990, 1992, and 1999, respectively. From 1995 to 2000, he worked at DACOM R&D Center. In 2000, he founded a network software company, IFeelNet Co., and worked for Bzweb Technologies as CTO from 2006 to 2009 in the United States. Since 2014, he has been an industry university cooperation professor at Chung-Ang University, Korea. His research interests include augmented reality.

Iksu Kim received his BS degree from the Department of Information Security, Suwon University, Suwon, Korea, in 2014. He is currently pursuing his MS degree in the Department of Imaging Science and Arts, Graduate School of Advanced Imaging Science, Multimedia and Film (GSAIM) at Chung-Ang University, Seoul, Korea. His research interests include augmented reality and computer vision.

Hyunki Hong received his BS, MS, and PhD degrees in electronic engineering from Chung-Ang University, Korea, in 1993, 1995, and 1998, respectively. From 1998 to 1999, he worked as a researcher in the Automatic Control Research Center, Seoul National University, Korea. From 2000 to 2014, he was a professor in GSAIM at Chung-Ang University. Since 2014, he has been a professor in the School of Integrative Engineering, Chung-Ang University. His research interests include augmented reality.
